Is Your Software Flying Blind?

What AI agent observability teaches us about software delivery — and why alignment becomes the harder problem as execution accelerates.

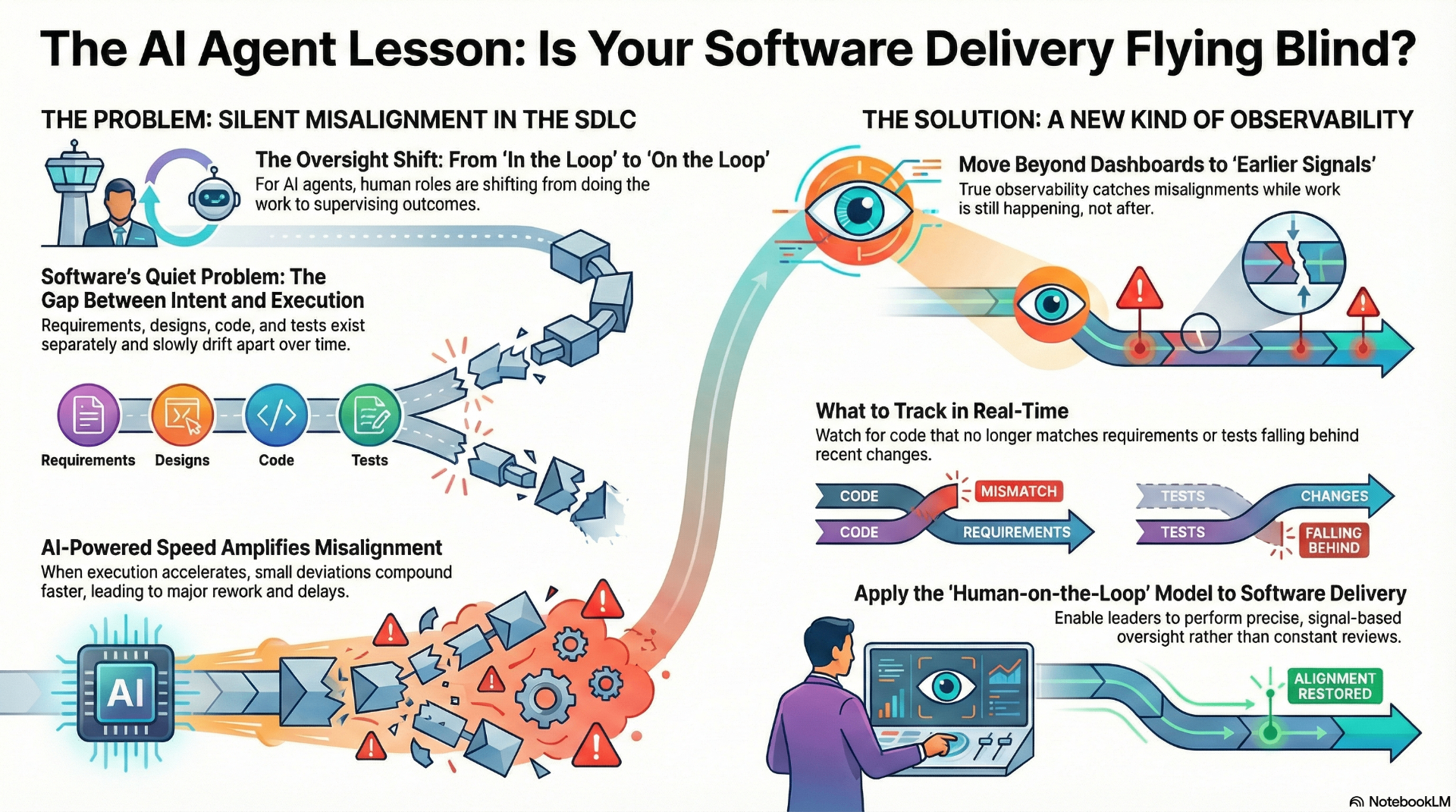

A recent Deloitte report on AI agents observes that as work shifts to autonomous systems, the biggest risk is no longer execution failure, but the inability to supervise outcomes at scale. Errors don't always show up as breakdowns — they propagate quietly until they surface as systemic risk.

Automation doesn't eliminate complexity; it relocates it. When machines execute faster than humans can track intent, the burden moves from doing the work to overseeing whether the work still means what it was supposed to mean.

The Quiet Problem Software Teams Already Live With

Requirements live in documents. Designs evolve in parallel tools. Code changes continuously. Tests, documentation, and releases follow on their own timelines. Each handoff relies on interpretation, and over time those interpretations drift.

When change accelerates, misalignment does not announce itself loudly. It accumulates quietly.

From Human in the Loop to Human on the Loop

Human roles are moving from doing the work to supervising it: from being in the loop on every step to standing on the loop and watching over it. But constant monitoring does not scale, and manual checking everywhere creates noise and fatigue. Checking only at the end, on the other hand, comes too late. What's needed is precision: oversight that activates when something drifts.

The Missing Piece: Continuous Context

The challenge is that intent, execution, and outcomes are rarely visible together as they evolve. Recognizing slow drift early, and knowing when to intervene, is quickly becoming the real work of modern software leadership.